
Store and retrieve reports on Skore Hub

This example shows how to use Project in hub mode to store reports remotely and inspect them. A key point is that summarize() returns a Summary, which is a subclass of pandas.DataFrame. In Jupyter you get an interactive widget, but you can always inspect and filter the summary as a regular DataFrame if you prefer.

Examples

To run this example against your own Skore Hub workspace and project, use the following command:

WORKSPACE=<workspace> PROJECT=<project> python plot_skore_hub_project.py

In this gallery, we push the reports to a public workspace.
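The command above passes the workspace and project names as environment variables. A minimal sketch of how a script could read them follows; the helper name `hub_project_name` and its error message are illustrative assumptions and are not part of skore.

```python
import os


def hub_project_name(environ=os.environ):
    """Build the "<workspace>/<project>" name from environment variables.

    Hypothetical helper: it only mirrors the WORKSPACE=... PROJECT=...
    command shown above.
    """
    missing = [name for name in ("WORKSPACE", "PROJECT") if not environ.get(name)]
    if missing:
        raise RuntimeError(
            f"Set these environment variables first: {', '.join(missing)}"
        )
    return f"{environ['WORKSPACE']}/{environ['PROJECT']}"


if __name__ == "__main__":
    # Placeholder values so the sketch runs on its own.
    demo_env = {"WORKSPACE": "my-workspace", "PROJECT": "my-project"}
    print(hub_project_name(demo_env))
```

Failing early with an explicit message is a design choice of this sketch: it avoids sending reports to an unintended project when one of the variables is unset.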

skore can communicate with Skore Hub, which serves two main purposes: storing and retrieving the reports that you create, and providing a user-friendly interface to explore and compare models.

First, we log in to Skore Hub so that we can push our reports to it later.

╭───────────────────────────────── Login to Skore Hub ─────────────────────────────────╮
│                                                                                      │
│ Successfully logged in, using API key.                                               │
│                                                                                      │
╰──────────────────────────────────────────────────────────────────────────────────────╯

To illustrate the integration with Skore Hub, we use a binary classification task where the goal is to predict whether a patient has a tumor or not.

import numpy as np
import skrub
from sklearn.datasets import load_breast_cancer

X, y = load_breast_cancer(return_X_y=True, as_frame=True)

# Map the integer targets (0/1) to readable string labels.
labels = np.array(["no tumor", "tumor"], dtype=object)
y = labels[y]

# Display an interactive overview of the features.
skrub.TableReport(X)

| | mean radius | mean texture | mean perimeter | mean area | mean smoothness | mean compactness | mean concavity | mean concave points | mean symmetry | mean fractal dimension | radius error | texture error | perimeter error | area error | smoothness error | compactness error | concavity error | concave points error | symmetry error | fractal dimension error | worst radius | worst texture | worst perimeter | worst area | worst smoothness | worst compactness | worst concavity | worst concave points | worst symmetry | worst fractal dimension |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 18.0 | 10.4 | 123. | 1.00e+03 | 0.118 | 0.278 | 0.300 | 0.147 | 0.242 | 0.0787 | 1.09 | 0.905 | 8.59 | 153. | 0.00640 | 0.0490 | 0.0537 | 0.0159 | 0.0300 | 0.00619 | 25.4 | 17.3 | 185. | 2.02e+03 | 0.162 | 0.666 | 0.712 | 0.265 | 0.460 | 0.119 |
| 1 | 20.6 | 17.8 | 133. | 1.33e+03 | 0.0847 | 0.0786 | 0.0869 | 0.0702 | 0.181 | 0.0567 | 0.543 | 0.734 | 3.40 | 74.1 | 0.00522 | 0.0131 | 0.0186 | 0.0134 | 0.0139 | 0.00353 | 25.0 | 23.4 | 159. | 1.96e+03 | 0.124 | 0.187 | 0.242 | 0.186 | 0.275 | 0.0890 |
| 2 | 19.7 | 21.2 | 130. | 1.20e+03 | 0.110 | 0.160 | 0.197 | 0.128 | 0.207 | 0.0600 | 0.746 | 0.787 | 4.58 | 94.0 | 0.00615 | 0.0401 | 0.0383 | 0.0206 | 0.0225 | 0.00457 | 23.6 | 25.5 | 152. | 1.71e+03 | 0.144 | 0.424 | 0.450 | 0.243 | 0.361 | 0.0876 |
| 3 | 11.4 | 20.4 | 77.6 | 386. | 0.142 | 0.284 | 0.241 | 0.105 | 0.260 | 0.0974 | 0.496 | 1.16 | 3.44 | 27.2 | 0.00911 | 0.0746 | 0.0566 | 0.0187 | 0.0596 | 0.00921 | 14.9 | 26.5 | 98.9 | 568. | 0.210 | 0.866 | 0.687 | 0.258 | 0.664 | 0.173 |
| 4 | 20.3 | 14.3 | 135. | 1.30e+03 | 0.100 | 0.133 | 0.198 | 0.104 | 0.181 | 0.0588 | 0.757 | 0.781 | 5.44 | 94.4 | 0.0115 | 0.0246 | 0.0569 | 0.0188 | 0.0176 | 0.00511 | 22.5 | 16.7 | 152. | 1.58e+03 | 0.137 | 0.205 | 0.400 | 0.163 | 0.236 | 0.0768 |
| 564 | 21.6 | 22.4 | 142. | 1.48e+03 | 0.111 | 0.116 | 0.244 | 0.139 | 0.173 | 0.0562 | 1.18 | 1.26 | 7.67 | 159. | 0.0103 | 0.0289 | 0.0520 | 0.0245 | 0.0111 | 0.00424 | 25.4 | 26.4 | 166. | 2.03e+03 | 0.141 | 0.211 | 0.411 | 0.222 | 0.206 | 0.0712 |
| 565 | 20.1 | 28.2 | 131. | 1.26e+03 | 0.0978 | 0.103 | 0.144 | 0.0979 | 0.175 | 0.0553 | 0.765 | 2.46 | 5.20 | 99.0 | 0.00577 | 0.0242 | 0.0395 | 0.0168 | 0.0190 | 0.00250 | 23.7 | 38.2 | 155. | 1.73e+03 | 0.117 | 0.192 | 0.322 | 0.163 | 0.257 | 0.0664 |
| 566 | 16.6 | 28.1 | 108. | 858. | 0.0846 | 0.102 | 0.0925 | 0.0530 | 0.159 | 0.0565 | 0.456 | 1.07 | 3.42 | 48.5 | 0.00590 | 0.0373 | 0.0473 | 0.0156 | 0.0132 | 0.00389 | 19.0 | 34.1 | 127. | 1.12e+03 | 0.114 | 0.309 | 0.340 | 0.142 | 0.222 | 0.0782 |
| 567 | 20.6 | 29.3 | 140. | 1.26e+03 | 0.118 | 0.277 | 0.351 | 0.152 | 0.240 | 0.0702 | 0.726 | 1.59 | 5.77 | 86.2 | 0.00652 | 0.0616 | 0.0712 | 0.0166 | 0.0232 | 0.00619 | 25.7 | 39.4 | 185. | 1.82e+03 | 0.165 | 0.868 | 0.939 | 0.265 | 0.409 | 0.124 |
| 568 | 7.76 | 24.5 | 47.9 | 181. | 0.0526 | 0.0436 | 0.00 | 0.00 | 0.159 | 0.0588 | 0.386 | 1.43 | 2.55 | 19.1 | 0.00719 | 0.00466 | 0.00 | 0.00 | 0.0268 | 0.00278 | 9.46 | 30.4 | 59.2 | 269. | 0.0900 | 0.0644 | 0.00 | 0.00 | 0.287 | 0.0704 |

| Column | Column name | dtype | Is sorted | Null values | Unique values | Mean | Std | Min | Median | IQR | Max |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | mean radius | Float64DType | False | 0 (0.0%) | 456 (80.1%) | 14.1 | 3.52 | 6.98 | 13.4 | 4.08 | 28.1 |
| 1 | mean texture | Float64DType | False | 0 (0.0%) | 479 (84.2%) | 19.3 | 4.30 | 9.71 | 18.8 | 5.63 | 39.3 |
| 2 | mean perimeter | Float64DType | False | 0 (0.0%) | 522 (91.7%) | 92.0 | 24.3 | 43.8 | 86.2 | 28.9 | 188. |
| 3 | mean area | Float64DType | False | 0 (0.0%) | 539 (94.7%) | 655. | 352. | 144. | 551. | 362. | 2.50e+03 |
| 4 | mean smoothness | Float64DType | False | 0 (0.0%) | 474 (83.3%) | 0.0964 | 0.0141 | 0.0526 | 0.0959 | 0.0189 | 0.163 |
| 5 | mean compactness | Float64DType | False | 0 (0.0%) | 537 (94.4%) | 0.104 | 0.0528 | 0.0194 | 0.0926 | 0.0655 | 0.345 |
| 6 | mean concavity | Float64DType | False | 0 (0.0%) | 537 (94.4%) | 0.0888 | 0.0797 | 0.00 | 0.0615 | 0.101 | 0.427 |
| 7 | mean concave points | Float64DType | False | 0 (0.0%) | 542 (95.3%) | 0.0489 | 0.0388 | 0.00 | 0.0335 | 0.0537 | 0.201 |
| 8 | mean symmetry | Float64DType | False | 0 (0.0%) | 432 (75.9%) | 0.181 | 0.0274 | 0.106 | 0.179 | 0.0338 | 0.304 |
| 9 | mean fractal dimension | Float64DType | False | 0 (0.0%) | 499 (87.7%) | 0.0628 | 0.00706 | 0.0500 | 0.0615 | 0.00842 | 0.0974 |
| 10 | radius error | Float64DType | False | 0 (0.0%) | 540 (94.9%) | 0.405 | 0.277 | 0.112 | 0.324 | 0.246 | 2.87 |
| 11 | texture error | Float64DType | False | 0 (0.0%) | 519 (91.2%) | 1.22 | 0.552 | 0.360 | 1.11 | 0.640 | 4.88 |
| 12 | perimeter error | Float64DType | False | 0 (0.0%) | 533 (93.7%) | 2.87 | 2.02 | 0.757 | 2.29 | 1.75 | 22.0 |
| 13 | area error | Float64DType | False | 0 (0.0%) | 528 (92.8%) | 40.3 | 45.5 | 6.80 | 24.5 | 27.3 | 542. |
| 14 | smoothness error | Float64DType | False | 0 (0.0%) | 547 (96.1%) | 0.00704 | 0.00300 | 0.00171 | 0.00638 | 0.00298 | 0.0311 |
| 15 | compactness error | Float64DType | False | 0 (0.0%) | 541 (95.1%) | 0.0255 | 0.0179 | 0.00225 | 0.0204 | 0.0194 | 0.135 |
| 16 | concavity error | Float64DType | False | 0 (0.0%) | 533 (93.7%) | 0.0319 | 0.0302 | 0.00 | 0.0259 | 0.0270 | 0.396 |
| 17 | concave points error | Float64DType | False | 0 (0.0%) | 507 (89.1%) | 0.0118 | 0.00617 | 0.00 | 0.0109 | 0.00707 | 0.0528 |
| 18 | symmetry error | Float64DType | False | 0 (0.0%) | 498 (87.5%) | 0.0205 | 0.00827 | 0.00788 | 0.0187 | 0.00832 | 0.0790 |
| 19 | fractal dimension error | Float64DType | False | 0 (0.0%) | 545 (95.8%) | 0.00379 | 0.00265 | 0.000895 | 0.00319 | 0.00231 | 0.0298 |
| 20 | worst radius | Float64DType | False | 0 (0.0%) | 457 (80.3%) | 16.3 | 4.83 | 7.93 | 15.0 | 5.78 | 36.0 |
| 21 | worst texture | Float64DType | False | 0 (0.0%) | 511 (89.8%) | 25.7 | 6.15 | 12.0 | 25.4 | 8.64 | 49.5 |
| 22 | worst perimeter | Float64DType | False | 0 (0.0%) | 514 (90.3%) | 107. | 33.6 | 50.4 | 97.7 | 41.3 | 251. |
| 23 | worst area | Float64DType | False | 0 (0.0%) | 544 (95.6%) | 881. | 569. | 185. | 686. | 569. | 4.25e+03 |
| 24 | worst smoothness | Float64DType | False | 0 (0.0%) | 411 (72.2%) | 0.132 | 0.0228 | 0.0712 | 0.131 | 0.0294 | 0.223 |
| 25 | worst compactness | Float64DType | False | 0 (0.0%) | 529 (93.0%) | 0.254 | 0.157 | 0.0273 | 0.212 | 0.192 | 1.06 |
| 26 | worst concavity | Float64DType | False | 0 (0.0%) | 539 (94.7%) | 0.272 | 0.209 | 0.00 | 0.227 | 0.268 | 1.25 |
| 27 | worst concave points | Float64DType | False | 0 (0.0%) | 492 (86.5%) | 0.115 | 0.0657 | 0.00 | 0.0999 | 0.0965 | 0.291 |
| 28 | worst symmetry | Float64DType | False | 0 (0.0%) | 500 (87.9%) | 0.290 | 0.0619 | 0.157 | 0.282 | 0.0675 | 0.664 |
| 29 | worst fractal dimension | Float64DType | False | 0 (0.0%) | 535 (94.0%) | 0.0839 | 0.0181 | 0.0550 | 0.0800 | 0.0206 | 0.207 |

All 30 columns have a high cardinality (> 40 unique values).


| Column 1 | Column 2 | Cramér's V | Pearson's Correlation |
|---|---|---|---|
| mean radius | mean perimeter | 0.846 | 0.998 |
| mean radius | mean area | 0.800 | 0.987 |
| radius error | perimeter error | 0.768 | 0.973 |
| worst radius | worst perimeter | 0.757 | 0.994 |
| mean perimeter | mean area | 0.754 | 0.987 |
| radius error | area error | 0.732 | 0.952 |
| area error | worst area | 0.699 | 0.811 |
| perimeter error | area error | 0.687 | 0.938 |
| worst radius | worst area | 0.676 | 0.984 |
| worst perimeter | worst area | 0.662 | 0.978 |
| mean area | area error | 0.652 | 0.800 |
| mean perimeter | worst radius | 0.643 | 0.969 |
| mean area | worst radius | 0.640 | 0.963 |
| mean radius | worst radius | 0.635 | 0.970 |
| concavity error | concave points error | 0.628 | 0.772 |
| mean radius | area error | 0.626 | 0.736 |
| concavity error | fractal dimension error | 0.610 | 0.727 |
| worst compactness | worst concavity | 0.599 | 0.892 |
| mean area | worst perimeter | 0.596 | 0.959 |
| mean perimeter | area error | 0.594 | 0.745 |


Store reports on Skore Hub

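For this problem, we use a logistic regression classifier wrapped in skrub’s tabular_pipeline(), which preprocesses the data if needed.

To send several reports to Skore Hub, we fit models with different values of the regularization parameter C (in scikit-learn's LogisticRegression, C is the inverse of the regularization strength).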

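from numpy import logspace
from sklearn.linear_model import LogisticRegression
from skore import Project, evaluate

# WORKSPACE and PROJECT are assumed to hold the values of the environment
# variables of the same name set on the command line.
project = Project(f"{WORKSPACE}/{PROJECT}", mode="hub")

# Five values of C, log-spaced between 1e-3 and 1e3; one report is stored per value.
for regularization in logspace(-3, 3, 5):
    project.put(
        f"lr-regularization-{regularization:.1e}",
        evaluate(
            skrub.tabular_pipeline(LogisticRegression(C=regularization)),
            X,
            y,
            splitter=0.2,
            pos_label="tumor",
        ),
    )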

Putting lr-regularization-1.0e-03 0:00:35
Consult your report at https://skore.probabl.ai/skore/example-skore-hub-project-dev/estimators/8701
Putting lr-regularization-3.2e-02 0:00:35
Consult your report at https://skore.probabl.ai/skore/example-skore-hub-project-dev/estimators/8702
Putting lr-regularization-1.0e+00 0:00:37
Consult your report at https://skore.probabl.ai/skore/example-skore-hub-project-dev/estimators/8703
Putting lr-regularization-3.2e+01 0:00:35
Consult your report at https://skore.probabl.ai/skore/example-skore-hub-project-dev/estimators/8704
Putting lr-regularization-1.0e+03 0:00:35
Consult your report at https://skore.probabl.ai/skore/example-skore-hub-project-dev/estimators/8705
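The five report keys above come from formatting each value of the log-spaced grid with the `.1e` format specifier. The sketch below reproduces the grid with the standard library only; `log_spaced` is a hypothetical stand-in for `numpy.logspace`, not the function the example calls.

```python
def log_spaced(start, stop, num):
    """Return `num` values evenly spaced on a log10 scale from 10**start to 10**stop.

    Stand-in for numpy.logspace(start, stop, num), written with the
    standard library so the sketch runs without NumPy.
    """
    step = (stop - start) / (num - 1)
    return [10.0 ** (start + i * step) for i in range(num)]


# Build one key per regularization value, as the loop over the grid does.
keys = [f"lr-regularization-{c:.1e}" for c in log_spaced(-3, 3, 5)]
for key in keys:
    print(key)
```

Because `.1e` keeps a single digit after the decimal point, two nearby values of C can map to the same key; a finer grid would need more digits in the format specifier to keep keys distinct.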

Retrieve reports stored on Skore Hub

Retrieving a report on Skore Hub is similar to retrieving a report in local mode. summarize() returns a Summary, which subclasses pandas.DataFrame. In a Jupyter environment it renders an interactive parallel-coordinates widget by default.

summary = project.summarize()

To see the regular DataFrame table instead of the widget (e.g. in scripts or when you prefer the table), wrap the summary in pandas.DataFrame:

import pandas as pd

pandas_summary = pd.DataFrame(summary)
pandas_summary

| | id | key | date | learner | ml_task | report_type | dataset | rmse | log_loss | roc_auc | fit_time | predict_time | rmse_mean | log_loss_mean | roc_auc_mean | fit_time_mean | predict_time_mean |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | skore:report:estimator:8701 | lr-regularization-1.0e-03 | 2026-04-29T16:07:11.000776+00:00 | LogisticRegression | binary-classification | estimator | 7887e234e3f622242e475e3da0cb5837 | None | 0.406397 | 0.987298 | 0.055146 | 0.034120 | None | None | None | None | None |
| 1 | skore:report:estimator:8702 | lr-regularization-3.2e-02 | 2026-04-29T16:07:46.872929+00:00 | LogisticRegression | binary-classification | estimator | 7887e234e3f622242e475e3da0cb5837 | None | 0.137499 | 0.995237 | 0.055377 | 0.033355 | None | None | None | None | None |
| 2 | skore:report:estimator:8703 | lr-regularization-1.0e+00 | 2026-04-29T16:08:24.393737+00:00 | LogisticRegression | binary-classification | estimator | 7887e234e3f622242e475e3da0cb5837 | None | 0.080457 | 0.995554 | 0.054876 | 0.032621 | None | None | None | None | None |
| 3 | skore:report:estimator:8704 | lr-regularization-3.2e+01 | 2026-04-29T16:09:00.004222+00:00 | LogisticRegression | binary-classification | estimator | 7887e234e3f622242e475e3da0cb5837 | None | 0.127249 | 0.992061 | 0.057464 | 0.032930 | None | None | None | None | None |
| 4 | skore:report:estimator:8705 | lr-regularization-1.0e+03 | 2026-04-29T16:09:35.496019+00:00 | LogisticRegression | binary-classification | estimator | 7887e234e3f622242e475e3da0cb5837 | None | 0.249399 | 0.990156 | 0.059721 | 0.033125 | None | None | None | None | None |
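Wrapping works because constructing a pandas.DataFrame from an instance of a subclass returns a plain DataFrame holding the same data. The sketch below illustrates this with a hypothetical `WidgetFrame` class; it is only a stand-in and does not reproduce how Summary is implemented.

```python
import pandas as pd


class WidgetFrame(pd.DataFrame):
    """Hypothetical DataFrame subclass with a custom notebook display."""

    def _repr_html_(self):
        # Stand-in for a rich widget; a notebook would render this string.
        return "<div>interactive widget placeholder</div>"


fancy = WidgetFrame({"key": ["model-a", "model-b"], "log_loss": [0.41, 0.14]})

# Passing the subclass instance to the base constructor drops the subclass,
# and with it the custom display, while keeping the data.
plain = pd.DataFrame(fancy)

print(type(fancy).__name__)
print(type(plain).__name__)
```

The data and the column names are made up for the illustration; only the constructor behaviour carries over to the summary.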

The summary holds the metadata and headline metrics of each report, which lets us filter reports quickly. The output of summary.info() lists the available columns:

<class 'skore._project._summary.Summary'>
MultiIndex: 5 entries, (0, 'skore:report:estimator:8701') to (4, 'skore:report:estimator:8705')
Data columns (total 16 columns):
 #   Column             Non-Null Count  Dtype
---  ------             --------------  -----
 0   key                5 non-null      object
 1   date               5 non-null      object
 2   learner            5 non-null      category
 3   ml_task            5 non-null      object
 4   report_type        5 non-null      object
 5   dataset            5 non-null      object
 6   rmse               0 non-null      object
 7   log_loss           5 non-null      float64
 8   roc_auc            5 non-null      float64
 9   fit_time           5 non-null      float64
 10  predict_time       5 non-null      float64
 11  rmse_mean          0 non-null      object
 12  log_loss_mean      0 non-null      object
 13  roc_auc_mean       0 non-null      object
 14  fit_time_mean      0 non-null      object
 15  predict_time_mean  0 non-null      object
dtypes: category(1), float64(4), object(11)
memory usage: 1.1+ KB

Filter reports by metric (e.g. keep only those whose log loss is below a given threshold) and work with the result as a table.

summary.query("log_loss < 0.2")["key"].tolist()

['lr-regularization-3.2e-02', 'lr-regularization-1.0e+00', 'lr-regularization-3.2e+01']
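Because the summary behaves like a DataFrame, the usual pandas selection tools apply. The sketch below rebuilds a small plain DataFrame from the key, log_loss and roc_auc values shown in the summary table earlier (it does not connect to Skore Hub), runs the same query, combines two conditions, and picks the key with the lowest log loss.

```python
import pandas as pd

# Values copied from the summary table shown earlier; this is a plain
# DataFrame used for illustration, not a skore Summary.
table = pd.DataFrame(
    {
        "key": [
            "lr-regularization-1.0e-03",
            "lr-regularization-3.2e-02",
            "lr-regularization-1.0e+00",
            "lr-regularization-3.2e+01",
            "lr-regularization-1.0e+03",
        ],
        "log_loss": [0.406397, 0.137499, 0.080457, 0.127249, 0.249399],
        "roc_auc": [0.987298, 0.995237, 0.995554, 0.992061, 0.990156],
    }
)

# Same filter as in the example: keep rows with a log loss below 0.2.
selected_keys = table.query("log_loss < 0.2")["key"].tolist()

# Conditions can be combined inside a single query string.
strict_keys = table.query("log_loss < 0.2 and roc_auc > 0.995")["key"].tolist()

# Row label of the smallest log loss, then the matching key.
best_key = table.loc[table["log_loss"].idxmin(), "key"]

print(selected_keys)
print(strict_keys)
print(best_key)
```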

Use reports() to load the corresponding reports from the project (optionally after filtering the summary).

reports = summary.query("log_loss < 0.2").reports(return_as="comparison")

len(reports.reports_)

3

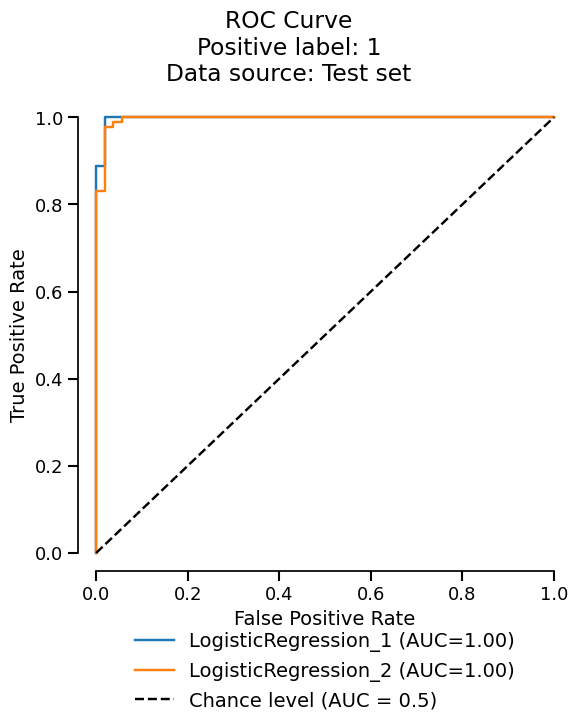

Since we got a ComparisonReport, we can use the metrics accessor to summarize the metrics across the reports.

reports.metrics.summarize().frame()

| Metric | LogisticRegression_1 | LogisticRegression_2 | LogisticRegression_3 |
|---|---|---|---|
| Accuracy | 0.956140 | 0.964912 | 0.947368 |
| Precision | 0.930556 | 0.970149 | 0.955224 |
| Recall | 1.000000 | 0.970149 | 0.955224 |
| ROC AUC | 0.995237 | 0.995554 | 0.992061 |
| Log loss | 0.137499 | 0.080457 | 0.127249 |
| Brier score | 0.035253 | 0.025149 | 0.029948 |
| Fit time (s) | 0.055377 | 0.054876 | 0.057464 |
| Predict time (s) | 0.033313 | 0.032726 | 0.032974 |
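As a reading aid for the table above, the sketch below recomputes accuracy, precision and recall from confusion-matrix counts. The counts are an assumption: they were inferred from the values reported for LogisticRegression_1 (a recall of 1.0, and an accuracy and precision consistent with a 114-sample test set, which would match splitter=0.2 if it denotes the held-out fraction of the 569 rows). They are not read from the stored report.

```python
def classification_metrics(tp, fp, fn, tn):
    """Return accuracy, precision and recall from confusion-matrix counts."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp),
        "recall": tp / (tp + fn),
    }


# Assumed counts (inferred, not read from the report): 67 true positives,
# 5 false positives, 0 false negatives and 42 true negatives.
metrics = classification_metrics(tp=67, fp=5, fn=0, tn=42)
for name, value in metrics.items():
    print(f"{name}: {value:.6f}")
```

With these counts the three values agree with the LogisticRegression_1 column to six decimals, which shows how a perfect recall can coexist with a lower precision: no positive sample is missed, but a few negatives are flagged as positive.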

Conclusion

Skore Hub provides a user-friendly interface for you to explore and compare models. You can easily store reports created using Skore.

Total running time of the script: (3 minutes 13.212 seconds)