EstimatorReport: Get insights from any scikit-learn estimator

This example shows how the skore.EstimatorReport class can be used to quickly get insights from any scikit-learn estimator.

Loading our dataset and defining our estimator

First, we load a dataset from skrub. Our goal is to predict whether a healthcare manufacturing company paid a medical doctor or hospital, in order to detect potential conflicts of interest.

from skrub.datasets import fetch_open_payments

dataset = fetch_open_payments()

df = dataset.X

y = dataset.y

Downloading 'open_payments' from https://github.com/skrub-data/skrub-data-files/raw/refs/heads/main/open_payments.zip (attempt 1/3)

from skrub import TableReport

TableReport(df)

| Applicable_Manufacturer_or_Applicable_GPO_Making_Payment_Name | Dispute_Status_for_Publication | Name_of_Associated_Covered_Device_or_Medical_Supply1 | Name_of_Associated_Covered_Drug_or_Biological1 | Physician_Specialty | |

|---|---|---|---|---|---|

| 0 | ELI LILLY AND COMPANY | No | Allopathic & Osteopathic Physicians|Pediatrics|Pediatric Rheumatology | ||

| 1 | ELI LILLY AND COMPANY | No | Allopathic & Osteopathic Physicians|Internal Medicine|Nephrology | ||

| 2 | ELI LILLY AND COMPANY | No | Allopathic & Osteopathic Physicians|Internal Medicine|Rheumatology | ||

| 3 | ELI LILLY AND COMPANY | No | Allopathic & Osteopathic Physicians|Internal Medicine|Endocrinology, Diabetes & Metabolism | ||

| 4 | ELI LILLY AND COMPANY | No | EFFIENT | Allopathic & Osteopathic Physicians|Pediatrics|Pediatric Hematology-Oncology | |

| 73,553 | GlaxoSmithKline, LLC. | No | ZIAGEN | ||

| 73,554 | ALERE SCARBOROUGH, INC. | No | Alere PBP2a | ||

| 73,555 | NovoCure Limited | No | |||

| 73,556 | Wright Medical Technology, Inc. | No | HIPS | ||

| 73,557 | Alcon Research Ltd | No | Express |

Applicable_Manufacturer_or_Applicable_GPO_Making_Payment_Name

- dtype: ObjectDType
- Null values: 0 (0.0%)
- Unique values: 1,466 (2.0%)

This column has a high cardinality (> 40).

Most frequent values: ['Merck Sharp & Dohme Corporation', 'Novartis Pharmaceuticals Corporation', 'Pfizer Inc.', 'Boston Scientific Corporation', 'Covidien Sales LLC', 'Stryker Corporation', 'SANOFI-AVENTIS U.S. LLC', 'AstraZeneca Pharmaceuticals LP', 'Genentech USA, Inc.', 'AbbVie, Inc.']

Dispute_Status_for_Publication

- dtype: ObjectDType
- Null values: 0 (0.0%)
- Unique values: 2 (< 0.1%)

Most frequent values: ['No', 'Yes']

Name_of_Associated_Covered_Device_or_Medical_Supply1

- dtype: ObjectDType
- Null values: 43,088 (58.6%)
- Unique values: 4,372 (5.9%)

This column has a high cardinality (> 40).

Most frequent values: ['Vascular', 'Spine', 'ARTHREX PRODUCT LINE DISTAL EXTREMITY ARTHROSCOPY', 'Surgical', 'ALL ARTHREX PRODUCT LINES', 'LifeVest', 'Spinal Cord Neurostimulation - Neuro', 'Da Vinci Surgical System', 'PAIN MANAGEMENT', 'Interventional Therapies']

Name_of_Associated_Covered_Drug_or_Biological1

- dtype: ObjectDType
- Null values: 36,233 (49.3%)
- Unique values: 2,262 (3.1%)

This column has a high cardinality (> 40).

Most frequent values: ['Invokana', 'Xarelto', 'NON-PRODUCT', 'Prolia', 'BUTRANS', 'NON BRAND', 'No Product', 'Nesina', 'ELIQUIS', 'Zytiga']

Physician_Specialty

- dtype: ObjectDType
- Null values: 3,996 (5.4%)
- Unique values: 513 (0.7%)

This column has a high cardinality (> 40).

Most frequent values: ['Allopathic & Osteopathic Physicians|Internal Medicine', 'Other Service Providers|Specialist', 'Allopathic & Osteopathic Physicians|Surgery', 'Allopathic & Osteopathic Physicians|Family Medicine', 'Allopathic & Osteopathic Physicians|Orthopaedic Surgery', 'Allopathic & Osteopathic Physicians|Internal Medicine|Cardiovascular Disease', 'Allopathic & Osteopathic Physicians|Pediatrics', 'Allopathic & Osteopathic Physicians|Radiology|Diagnostic Radiology', 'Allopathic & Osteopathic Physicians|Obstetrics & Gynecology', 'Student, Health Care|Student in an Organized Health Care Education/Training Program']

| Column | Column name | dtype | Is sorted | Null values | Unique values | Mean | Std | Min | Median | Max |
|---|---|---|---|---|---|---|---|---|---|---|
| 0 | Applicable_Manufacturer_or_Applicable_GPO_Making_Payment_Name | ObjectDType | False | 0 (0.0%) | 1466 (2.0%) | | | | | |
| 1 | Dispute_Status_for_Publication | ObjectDType | False | 0 (0.0%) | 2 (< 0.1%) | | | | | |
| 2 | Name_of_Associated_Covered_Device_or_Medical_Supply1 | ObjectDType | False | 43088 (58.6%) | 4372 (5.9%) | | | | | |
| 3 | Name_of_Associated_Covered_Drug_or_Biological1 | ObjectDType | False | 36233 (49.3%) | 2262 (3.1%) | | | | | |
| 4 | Physician_Specialty | ObjectDType | False | 3996 (5.4%) | 513 (0.7%) | | | | | |


| Column 1 | Column 2 | Cramér's V | Pearson's Correlation |

|---|---|---|---|

| Name_of_Associated_Covered_Device_or_Medical_Supply1 | Name_of_Associated_Covered_Drug_or_Biological1 | 0.263 | |

| Applicable_Manufacturer_or_Applicable_GPO_Making_Payment_Name | Name_of_Associated_Covered_Drug_or_Biological1 | 0.214 | |

| Applicable_Manufacturer_or_Applicable_GPO_Making_Payment_Name | Name_of_Associated_Covered_Device_or_Medical_Supply1 | 0.132 | |

| Name_of_Associated_Covered_Device_or_Medical_Supply1 | Physician_Specialty | 0.0962 | |

| Dispute_Status_for_Publication | Physician_Specialty | 0.0960 | |

| Dispute_Status_for_Publication | Name_of_Associated_Covered_Drug_or_Biological1 | 0.0895 | |

| Name_of_Associated_Covered_Drug_or_Biological1 | Physician_Specialty | 0.0646 | |

| Applicable_Manufacturer_or_Applicable_GPO_Making_Payment_Name | Physician_Specialty | 0.0510 | |

| Applicable_Manufacturer_or_Applicable_GPO_Making_Payment_Name | Dispute_Status_for_Publication | 0.0308 | |

| Dispute_Status_for_Publication | Name_of_Associated_Covered_Device_or_Medical_Supply1 | 0.0284 |


| | status |
|---|---|
| 0 | disallowed |
| 1 | disallowed |
| 2 | disallowed |
| 3 | disallowed |
| 4 | disallowed |
| 73,553 | allowed |
| 73,554 | allowed |
| 73,555 | allowed |
| 73,556 | allowed |
| 73,557 | allowed |

status

- dtype: ObjectDType
- Null values: 0 (0.0%)
- Unique values: 2 (< 0.1%)

Most frequent values: ['disallowed', 'allowed']

| Column | Column name | dtype | Is sorted | Null values | Unique values | Mean | Std | Min | Median | Max |
|---|---|---|---|---|---|---|---|---|---|---|
| 0 | status | ObjectDType | True | 0 (0.0%) | 2 (< 0.1%) | | | | | |


Looking at the distribution of the target, we observe that this classification task is quite imbalanced. This means that we have to be careful when selecting a set of statistical metrics to evaluate the classification performance of our predictive model. In addition, we see that the class labels are not specified by an integer 0 or 1 but instead by a string “allowed” or “disallowed”.

For our application, the label of interest is “allowed”.

pos_label, neg_label = "allowed", "disallowed"
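To see why class imbalance calls for care when choosing metrics, here is a minimal sketch in plain Python. The labels below are made up for illustration (the 95/5 split is an assumption, not the actual class ratio of this dataset). A trivial baseline that always predicts the majority class reaches a high accuracy while never detecting the label of interest.

```python
# Hypothetical, hand-made labels: 95 "disallowed" and 5 "allowed" samples.
y_true = ["disallowed"] * 95 + ["allowed"] * 5
# A trivial baseline that always predicts the majority class.
y_pred = ["disallowed"] * len(y_true)

positive = "allowed"
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
true_positives = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
recall = true_positives / sum(t == positive for t in y_true)

print(f"accuracy={accuracy:.2f}, recall={recall:.2f}")
```

Accuracy alone would make this baseline look good, which is why metrics such as precision and recall on the label of interest are worth tracking too.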

Before training a predictive model, we need to split our dataset into a training and a validation set.

from skore import train_test_split

# If you have many dataframes to split on, you can always ask train_test_split to return

# a dictionary. Remember, it needs to be passed as a keyword argument!

split_data = train_test_split(X=df, y=y, random_state=42, as_dict=True)

╭───────────────────────────── HighClassImbalanceWarning ──────────────────────────────╮

│ It seems that you have a classification problem with a high class imbalance. In this │

│ case, using train_test_split may not be a good idea because of high variability in │

│ the scores obtained on the test set. To tackle this challenge we suggest to use │

│ skore's CrossValidationReport with the `splitter` parameter of your choice. │

╰──────────────────────────────────────────────────────────────────────────────────────╯

╭───────────────────────────────── ShuffleTrueWarning ─────────────────────────────────╮

│ We detected that the `shuffle` parameter is set to `True` either explicitly or from │

│ its default value. In case of time-ordered events (even if they are independent), │

│ this will result in inflated model performance evaluation because natural drift will │

│ not be taken into account. We recommend setting the shuffle parameter to `False` in │

│ order to ensure the evaluation process is really representative of your production │

│ release process. │

╰──────────────────────────────────────────────────────────────────────────────────────╯

By the way, notice how skore’s train_test_split() automatically warns us about the class imbalance.

Now, we need to define a predictive model. Fortunately, skrub provides a convenient function (skrub.tabular_pipeline()) to get a strong baseline predictive model with a single line of code. Its feature engineering is generic rather than handcrafted for our task, but it still provides a good starting point.

So let’s create a classifier for our task.

from skrub import tabular_pipeline

estimator = tabular_pipeline("classifier")

estimator

Pipeline(steps=[('tablevectorizer',
                 TableVectorizer(low_cardinality=ToCategorical())),
                ('histgradientboostingclassifier',
                 HistGradientBoostingClassifier())])

TableVectorizer parameters

| Parameter | Value |
|---|---|
| cardinality_threshold | 40 |
| low_cardinality | ToCategorical() |
| high_cardinality | StringEncoder() |
| numeric | PassThrough() |
| datetime | DatetimeEncoder() |
| specific_transformers | () |
| drop_null_fraction | 1.0 |
| drop_if_constant | False |
| drop_if_unique | False |
| datetime_format | None |
| null_strings | None |
| n_jobs | None |

DatetimeEncoder parameters

| Parameter | Value |
|---|---|
| resolution | 'hour' |
| add_weekday | False |
| add_total_seconds | True |
| add_day_of_year | False |
| periodic_encoding | None |

StringEncoder parameters

| Parameter | Value |
|---|---|
| n_components | 30 |
| vectorizer | 'tfidf' |
| ngram_range | (3, ...) |
| analyzer | 'char_wb' |
| stop_words | None |
| random_state | None |
| vocabulary | None |

Getting insights from our estimator

Introducing the skore.EstimatorReport class

Now, we would like to get some insights from our predictive model. One way is to use the skore.EstimatorReport class. Its constructor detects that our estimator is unfitted and fits it for us on the training data.

from skore import EstimatorReport

report = EstimatorReport(estimator, **split_data, pos_label=pos_label)

report

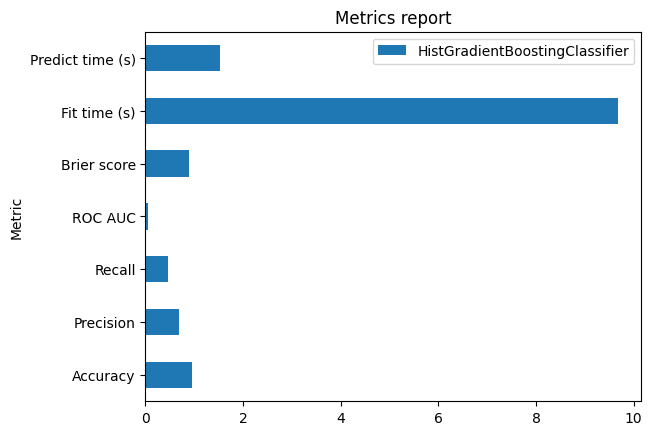

| Metric | HistGradientBoostingClassifier |

|---|---|

| Accuracy | 0.951985 |

| Precision | 0.682231 |

| Recall | 0.451890 |

| ROC AUC | 0.941697 |

| Log loss | 0.124163 |

| Brier score | 0.035026 |

| Fit time (s) | 9.464977 |

| Predict time (s) | 1.542027 |


Once the report is created, calling its help() method lists the tools available to get insights from our specific model on our specific task.

We can also access the help of each individual accessor. For instance:

report.metrics.help()

Metrics computation with aggressive caching

At this point, we might want a first look at the statistical performance of our model on the validation set that we provided. We can access it by calling any of the metrics displayed above. Since we are greedy, we want several metrics at once, so we use the summarize() method.

import time

start = time.time()

metric_report = report.metrics.summarize().frame()

end = time.time()

metric_report

print(f"Time taken to compute the metrics: {end - start:.2f} seconds")

| Metric | HistGradientBoostingClassifier |
|---|---|
| Accuracy | 0.951985 |
| Precision | 0.682231 |
| Recall | 0.451890 |
| ROC AUC | 0.941697 |
| Log loss | 0.124163 |
| Brier score | 0.035026 |
| Fit time (s) | 9.464977 |
| Predict time (s) | 1.542027 |

Time taken to compute the metrics: 0.00 seconds

An interesting feature of the skore.EstimatorReport is its caching mechanism. When the dataset is large enough, computing the predictions of a model is no longer cheap. For instance, on our smallish dataset, computing the predictions on the test set alone took about 1.5 seconds (see the predict time above). The report caches the predictions, so computing the same metric again, or another metric that relies on the same predictions, is faster. Let’s check by requesting the same metrics report again.

start = time.time()

metric_report = report.metrics.summarize().frame()

end = time.time()

metric_report

print(f"Time taken to compute the metrics: {end - start:.2f} seconds")

| Metric | HistGradientBoostingClassifier |
|---|---|
| Accuracy | 0.951985 |
| Precision | 0.682231 |
| Recall | 0.451890 |
| ROC AUC | 0.941697 |
| Log loss | 0.124163 |
| Brier score | 0.035026 |
| Fit time (s) | 9.464977 |
| Predict time (s) | 1.542027 |

Time taken to compute the metrics: 0.00 seconds

Note that when the model is fitted or the predictions are computed, we additionally store the time the operation took:

report.metrics.timings()

{'fit_time': 9.464977432000069, 'predict_time_test': 1.542026749999991, 'predict_time_train': 4.546076176000042}

Since we obtain a pandas dataframe, we can also use the plotting interface of pandas.

ax = metric_report.plot.barh()

_ = ax.set_title("Metrics report")

Whenever computing a metric, we check if the predictions are available in the cache and reload them if available. So for instance, let’s compute the log loss.

0.12416306414423657

Time taken to compute the log loss: 0.00 seconds

We can show that without initial cache, it would have taken more time to compute the log loss.

0.12416306414423657

Time taken to compute the log loss: 4.73 seconds
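The idea behind this caching can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not skore's actual implementation: `SlowModel`, `ToyReport`, and the layout of the cache key are hypothetical names made up for this example.

```python
import time


class SlowModel:
    """Stand-in for an estimator whose predictions are expensive."""

    def __init__(self):
        self.n_calls = 0

    def predict(self, X):
        self.n_calls += 1
        time.sleep(0.05)  # simulate an expensive prediction
        return [x > 0 for x in X]


class ToyReport:
    """Hypothetical report that caches predictions per data source."""

    def __init__(self, model, X_test, y_test):
        self.model, self.X_test, self.y_test = model, X_test, y_test
        self._cache = {}

    def _predictions(self, data_source="test"):
        key = (data_source, "predict")
        if key not in self._cache:  # only compute on a cache miss
            self._cache[key] = self.model.predict(self.X_test)
        return self._cache[key]

    def accuracy(self):
        y_pred = self._predictions()
        return sum(t == p for t, p in zip(self.y_test, y_pred)) / len(self.y_test)

    def error_rate(self):
        # A different metric that reuses the same cached predictions.
        return 1 - self.accuracy()

    def clear_cache(self):
        self._cache.clear()


model = SlowModel()
report_sketch = ToyReport(model, X_test=[-1, 2, 3, -4], y_test=[False, True, False, False])
report_sketch.accuracy()
report_sketch.error_rate()
calls_with_cache = model.n_calls  # predictions were computed only once
report_sketch.clear_cache()
report_sketch.accuracy()
calls_after_clear = model.n_calls  # clearing the cache forces a recomputation
print(calls_with_cache, calls_after_clear)
```

Two metrics share a single call to `predict`, and only clearing the cache triggers a second one, which mirrors the timing difference observed above.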

By default, the metrics are computed on the test set only. However, if a training set is provided, we can also compute the metrics on it by specifying the data_source parameter.

0.09999389347526483

Be aware that we can also benefit from the caching mechanism with our own custom

metrics. Skore only expects that we define our own metric function to take y_true

and y_pred as the first two positional arguments. It can take any other arguments.

Let’s see an example.

def operational_decision_cost(y_true, y_pred, amount):
    mask_true_positive = (y_true == pos_label) & (y_pred == pos_label)
    mask_true_negative = (y_true == neg_label) & (y_pred == neg_label)
    mask_false_positive = (y_true == neg_label) & (y_pred == pos_label)
    mask_false_negative = (y_true == pos_label) & (y_pred == neg_label)
    fraudulent_refuse = mask_true_positive.sum() * 50
    fraudulent_accept = -amount[mask_false_negative].sum()
    legitimate_refuse = mask_false_positive.sum() * -5
    legitimate_accept = (amount[mask_true_negative] * 0.02).sum()
    return fraudulent_refuse + fraudulent_accept + legitimate_refuse + legitimate_accept

In our use case, we have an operational decision to make that translates the classification outcome into a cost. It translates the confusion matrix into a cost matrix based on an amount linked to each sample in the dataset. Here, we randomly generate some amounts as an illustration.

import numpy as np

from sklearn.metrics import make_scorer

rng = np.random.default_rng(42)

amount = rng.integers(low=100, high=1000, size=len(split_data["y_test"]))

report.metrics.add(metric=make_scorer(operational_decision_cost, amount=amount))

cost = report.metrics.summarize(metric="operational_decision_cost")

cost.frame()

| Metric | HistGradientBoostingClassifier |
|---|---|
| Operational Decision Cost | -136140.34 |
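To make the cost computation concrete, here is a small worked example in plain Python on four hand-made samples, one per confusion-matrix outcome. The labels and amounts are invented for illustration; the rates (a gain of 50 per true positive, the full amount lost per false negative, a penalty of 5 per false positive, and 2% of the amount earned per true negative) are taken from the function above.

```python
pos_label, neg_label = "allowed", "disallowed"

# Hypothetical samples: one of each confusion-matrix outcome.
y_true = ["allowed", "allowed", "disallowed", "disallowed"]
y_pred = ["allowed", "disallowed", "allowed", "disallowed"]
amount = [200, 300, 400, 500]

cost = 0.0
for truth, pred, amt in zip(y_true, y_pred, amount):
    if truth == pos_label and pred == pos_label:
        cost += 50  # true positive: fixed gain
    elif truth == pos_label and pred == neg_label:
        cost -= amt  # false negative: the whole amount is lost
    elif truth == neg_label and pred == pos_label:
        cost -= 5  # false positive: small fixed penalty
    else:
        cost += 0.02 * amt  # true negative: 2% of the amount is earned

print(cost)
```

The total is 50 - 300 - 5 + 10 = -245: a single false negative with a large amount dominates the result, which is the kind of asymmetry that accuracy does not capture.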

By the way, skore caches the model predictions. This is really handy because it means that we can compute additional metrics without having to recompute the predictions.

report.metrics.summarize(
    metric=["precision", "recall", "operational_decision_cost"]
).frame()

| Metric | HistGradientBoostingClassifier |
|---|---|
| Precision | 0.682231 |
| Recall | 0.451890 |
| Operational Decision Cost | -136140.340000 |

Effortless one-liner plotting

The skore.EstimatorReport class also implements a number of the most common data science plots.

As with the metrics, only the plots that are meaningful for the provided estimator are available.

report.metrics.help()

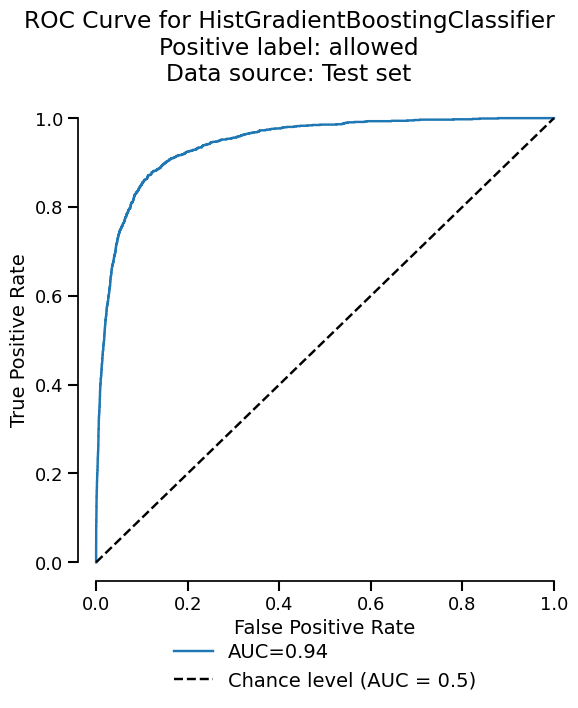

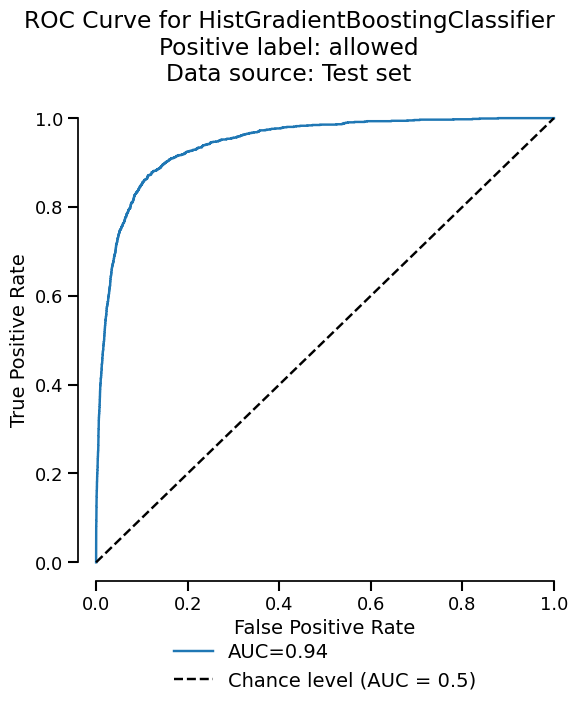

Let’s start by plotting the ROC curve for our binary classification task.

display = report.metrics.roc()

display.plot()

<Figure size 600x750 with 1 Axes>
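For intuition about what the ROC curve displays, the sketch below computes its points by hand on a few made-up scores: each threshold yields one (false positive rate, true positive rate) pair, and the area under the curve follows from the trapezoidal rule. The data and the helper names `roc_points` and `auc` are hypothetical and only serve this illustration; skore and scikit-learn compute all of this for us.

```python
def roc_points(y_true, scores):
    """Return (fpr, tpr) pairs, one per distinct threshold, sorted by fpr."""
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 0)
        points.append((fp / n_neg, tp / n_pos))
    return points


def auc(points):
    """Area under the curve using the trapezoidal rule."""
    return sum(
        (x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:])
    )


# Hypothetical labels (1 = positive class) and predicted probabilities.
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]

points = roc_points(y_true, scores)
print(points)
print(f"AUC = {auc(points):.3f}")
```

On this toy data, 8 of the 9 (positive, negative) pairs are ranked correctly, so the area under the curve is 8/9.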

The plot functionality is built upon the scikit-learn display objects. We return these displays (slightly modified to improve the UI) in case we want to tweak some of the plot properties. We can have a quick look at the available attributes and methods by calling the help method or simply by printing the display.

fig = display.plot()

fig.axes[0].set_title("Example of a ROC curve")

fig

<Figure size 600x750 with 1 Axes>

As with the metrics, we aggressively use caching to avoid recomputing the predictions of the model. We also cache the plot display object by detecting whether the input parameters are the same as in the previous call. Let’s demonstrate the kind of performance gain we can get.

<Figure size 600x750 with 1 Axes>

Time taken to compute the ROC curve: 0.10 seconds

Now, let’s clear the cache and check if we get a slowdown.

<Figure size 600x750 with 1 Axes>

Time taken to compute the ROC curve: 4.87 seconds

As expected, since we need to recompute the predictions, it takes more time.

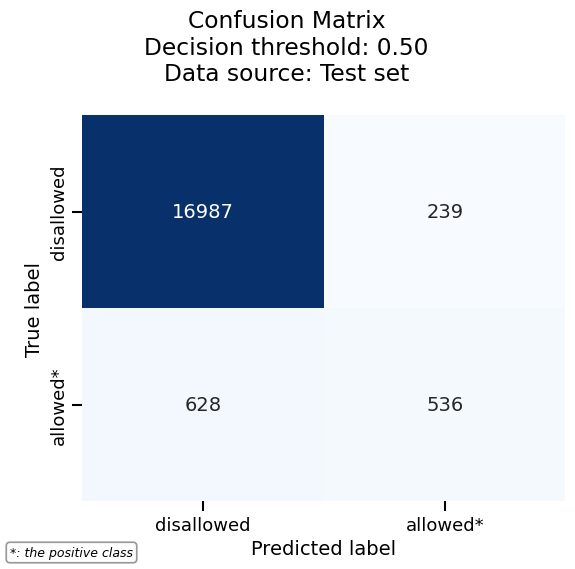

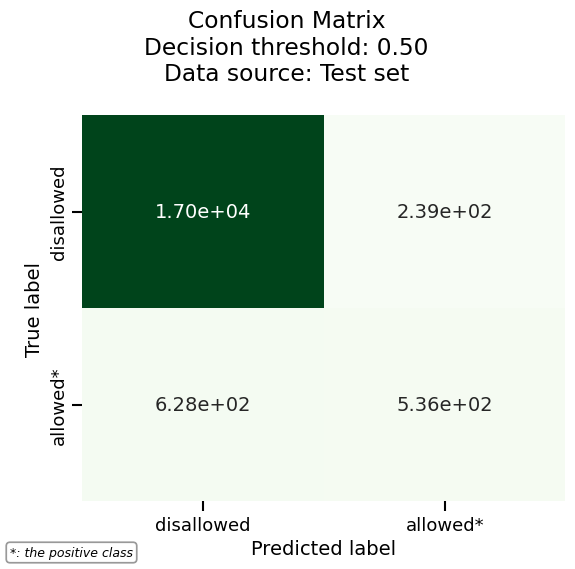

Visualizing the confusion matrix

Another useful visualization for classification tasks is the confusion matrix, which shows the counts of correct and incorrect predictions for each class.

Let’s first start with a basic confusion matrix:

cm_display = report.metrics.confusion_matrix()

cm_display.plot()

<Figure size 600x600 with 1 Axes>

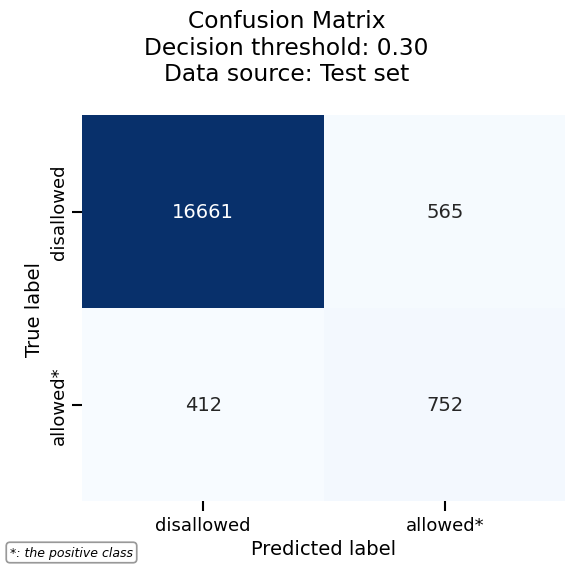

In binary classification, a confusion matrix depends on the decision threshold used to convert predicted probabilities into class labels. By default, skore uses a threshold of 0.5, but confusion matrices are actually computed at every threshold internally.

# To visualize the confusion matrix at a different threshold, use the

# ``threshold_value`` parameter. For example, a threshold of 0.3 will classify

# more samples as positive:

cm_display.plot(threshold_value=0.3)

<Figure size 600x600 with 1 Axes>
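The effect of the threshold can be reproduced by hand. In the sketch below, with made-up probabilities for the positive class, lowering the threshold from 0.5 to 0.3 turns some negative predictions into positive ones. The helper `confusion_counts` is a hypothetical name, not part of skore, and the "greater than or equal" comparison is an assumption of this sketch.

```python
def confusion_counts(y_true, proba_pos, threshold):
    """Count (tp, fn, fp, tn) when predicting positive if proba >= threshold."""
    tp = fn = fp = tn = 0
    for truth, proba in zip(y_true, proba_pos):
        predicted_positive = proba >= threshold  # assumed decision rule
        if truth and predicted_positive:
            tp += 1
        elif truth:
            fn += 1
        elif predicted_positive:
            fp += 1
        else:
            tn += 1
    return tp, fn, fp, tn


# Hypothetical labels (True = positive class) and predicted probabilities.
y_true = [True, True, True, False, False, False]
proba_pos = [0.9, 0.4, 0.2, 0.6, 0.35, 0.1]

at_default = confusion_counts(y_true, proba_pos, threshold=0.5)
at_lower = confusion_counts(y_true, proba_pos, threshold=0.3)
print(at_default, at_lower)
```

Lowering the threshold trades false negatives for false positives: here one more true positive is recovered at the price of one more false positive.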

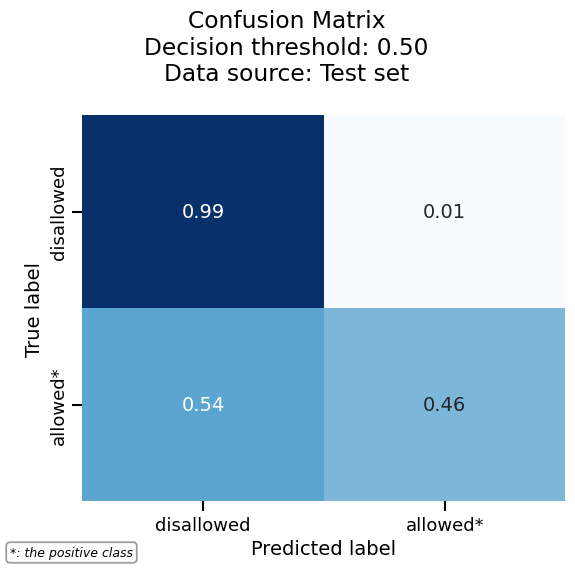

We can normalize the confusion matrix to get percentages instead of raw counts. Here we normalize by true labels (rows):

cm_display.plot(normalize="true")

<Figure size 600x600 with 1 Axes>
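Normalizing by true labels divides each cell by the total of its row, so every row sums to 1. As a minimal sketch in plain Python, using the test-set counts that this example reports when the confusion matrix is exported as a DataFrame:

```python
# Counts taken from the exported confusion matrix of this example (test set).
# Outer keys are true labels, inner keys are predicted labels.
counts = {
    "allowed": {"allowed": 526, "disallowed": 638},
    "disallowed": {"allowed": 245, "disallowed": 16981},
}

# Divide every cell by the total of its row (i.e. of its true label).
normalized = {
    true_label: {pred: value / sum(row.values()) for pred, value in row.items()}
    for true_label, row in counts.items()
}

for true_label, row in normalized.items():
    print(true_label, {pred: round(value, 3) for pred, value in row.items()})
```

Read this way, the diagonal of the first row is the recall on “allowed”, which makes the weak spot of the model easier to see than raw counts dominated by the majority class.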

More plotting options are available via heatmap_kwargs, which are passed to

seaborn’s heatmap. For example, we can customize the colormap and number format:

cm_display.set_style(heatmap_kwargs={"cmap": "Greens", "fmt": ".2e"})

cm_display.plot()

<Figure size 600x600 with 1 Axes>

Finally, the confusion matrix can also be exported as a pandas DataFrame for further analysis:

| | true_label | predicted_label | value | split | estimator | data_source |
|---|---|---|---|---|---|---|
| 0 | allowed | allowed | 526 | NaN | HistGradientBoostingClassifier | test |
| 1 | allowed | disallowed | 638 | NaN | HistGradientBoostingClassifier | test |
| 2 | disallowed | allowed | 245 | NaN | HistGradientBoostingClassifier | test |
| 3 | disallowed | disallowed | 16981 | NaN | HistGradientBoostingClassifier | test |
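These four counts are enough to recover the threshold-based metrics reported earlier. As a quick sanity check in plain Python, with “allowed” as the positive label:

```python
# Counts from the table above (test set, "allowed" is the positive label).
tp, fn, fp, tn = 526, 638, 245, 16981

accuracy = (tp + tn) / (tp + fn + fp + tn)
precision = tp / (tp + fp)
recall = tp / (tp + fn)

print(f"accuracy={accuracy:.6f} precision={precision:.6f} recall={recall:.6f}")
```

The values match the Accuracy, Precision, and Recall rows of the metrics tables shown earlier in this example.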

See also

To use the EstimatorReport to inspect your models, see EstimatorReport: Inspecting your models with the feature importance.
